Latest Stories

- Student Activities Fees: What Its Approved Increase Means for Students

- Student Senate Square

- How to Talk with Your Roommate

- Circulating the Sports Sphere: Spring 2024

- NJIT Researchers Find a Way to Efficiently Detect ‘Forever Chemicals’

- Experiences as Women at NJIT

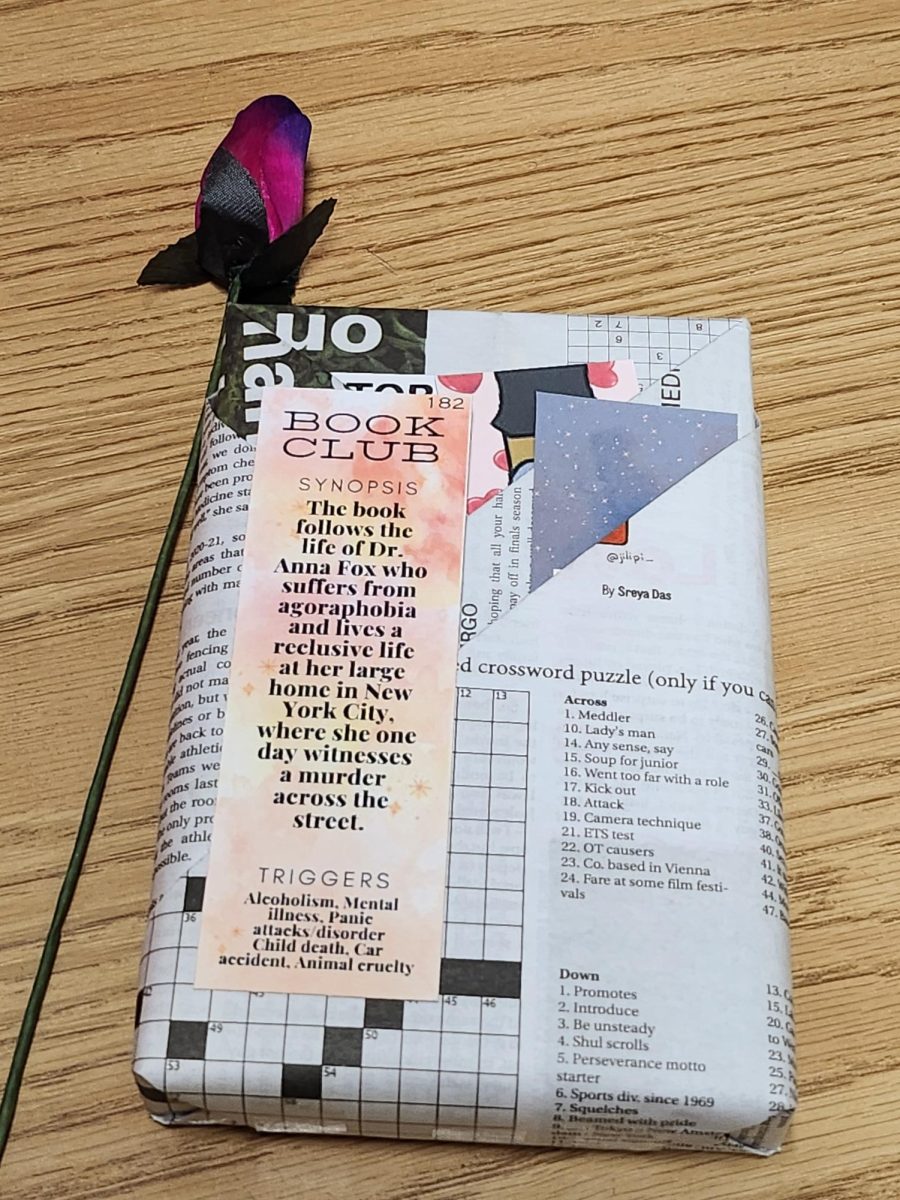

- How Blind Date with a Book Blossomed

- Make Democracy Work — Vote in the Student Senate Elections

- National Guard Deployed in NYC Subways: A Commuter’s Concerns

- The Top Three Tech Fails of 2023